List of my tools and data

- Fine-tuned w2v2 models for the Interspeech 2022 paper

Downloadable w2v2 models fine-tuned for SLU on MEDIA and FSC, used for the Interspeech 2022 paper.

See here for extracting features

- Data for the system described in the paper submitted to Interspeech 2021

On this page I provide the input features used for the experiments described in the paper accepted at Interspeech 2021 and in the submission to NeurIPS 2021. The data also includes wav2vec 2.0 models fine-tuned on MEDIA and the features extracted with those models. For a description of the system, please see our git repository for Interspeech 2021.

- TarcMTS

Multi-task sequence-to-sequence Fairseq system

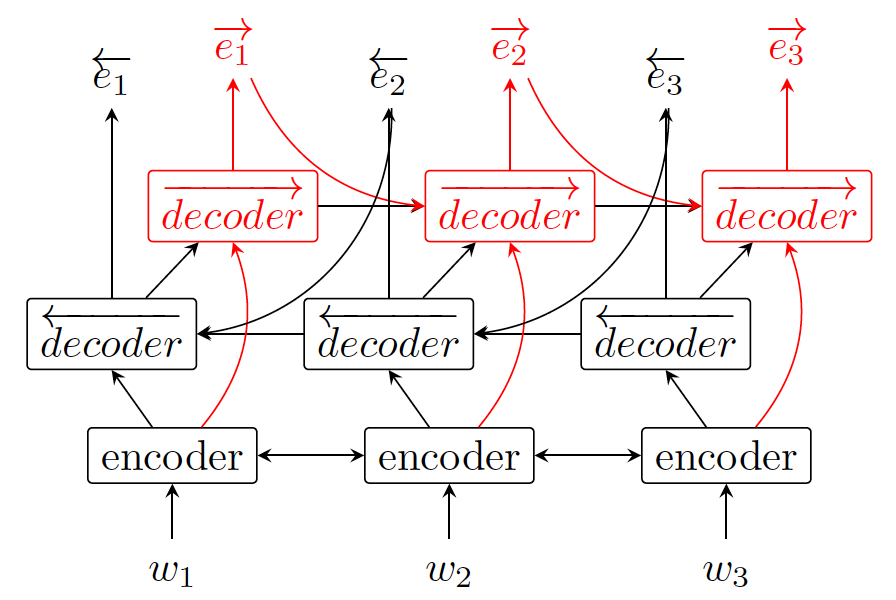

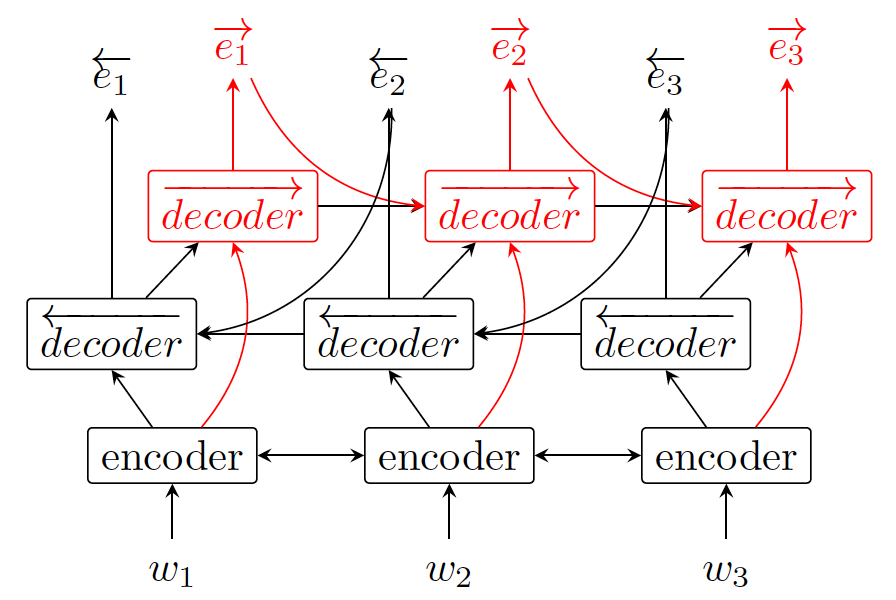

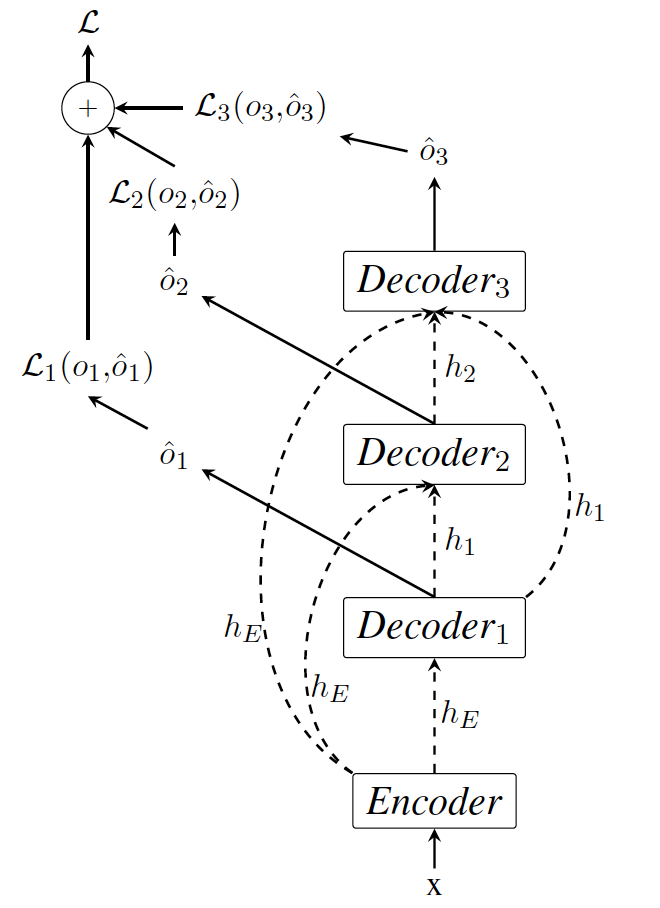

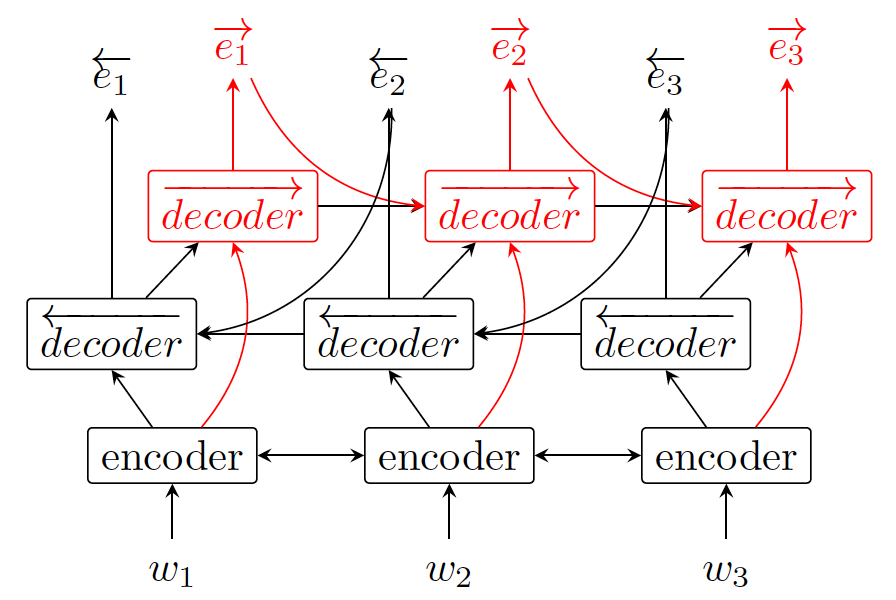

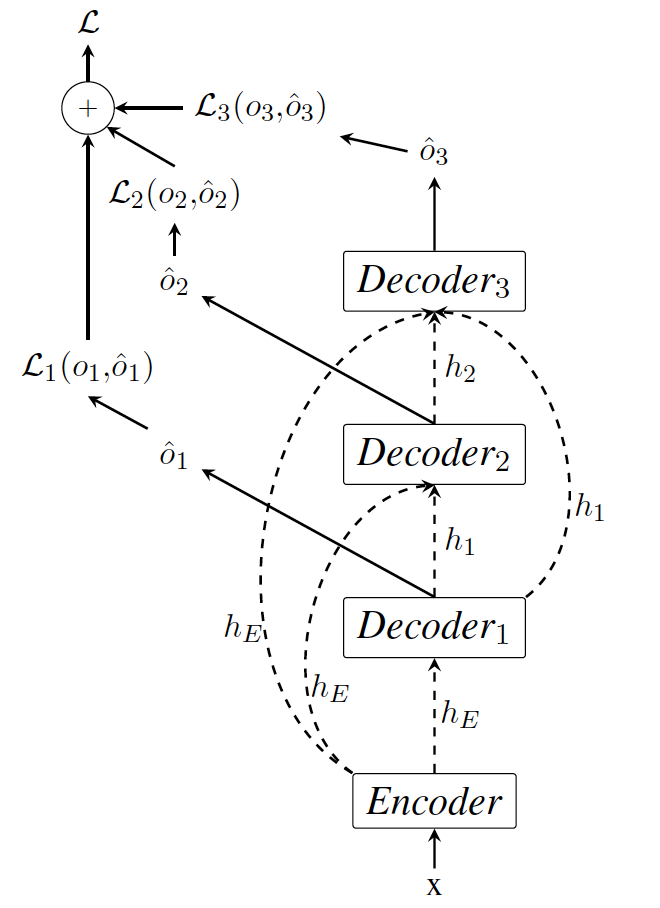

- Seq2Biseq - Bidirectional Output-wise Recurrent Neural Networks for Sequence Modelling

Bidirectional backward-forward decoding, sequence-to-sequence tagger
